Thorium is engineered for AI applications where conventional embedded systems no longer suffice and where cloud or server-based solutions are technically or economically impractical. It combines extreme compute performance with industrial-grade mechanics, enabling complex AI models to run directly at the edge – deterministic, low-latency, and independent of centralized infrastructure.

- Server-class AI performance at the edge: Over 2000 FP4 TFLOPS enable deployment of very large models directly in the field.

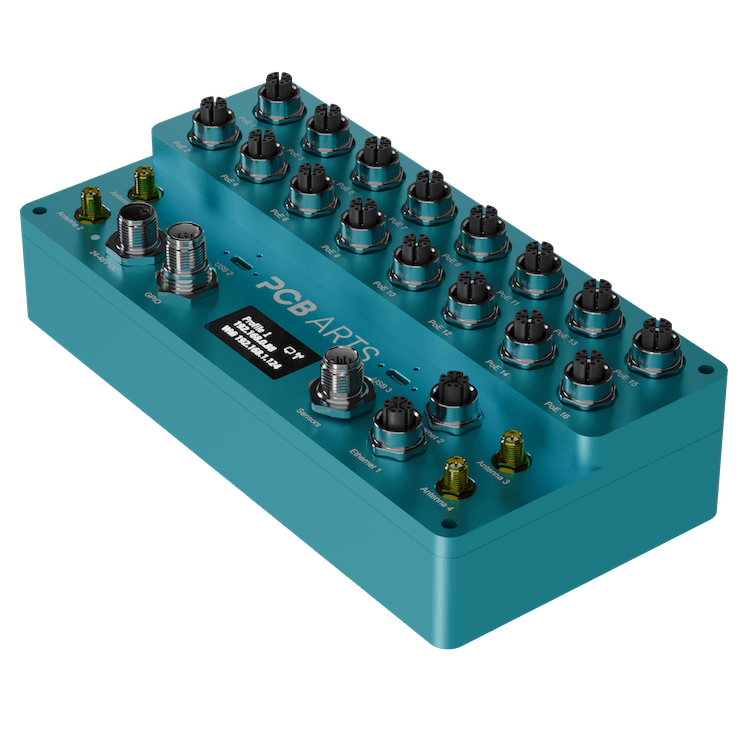

- High system integration: Compute, networking, storage, and security combined in a compact, industrial-grade platform.

- Built for demanding environments: IP67 rating and rugged industrial connectors.

- Scalable & production-ready: Designed for series production and long-term availability.

Thorium: Server-class AI performance. Directly at the edge.


Accelerated by NVIDIA Jetson

NVIDIA Jetson is the leading AI-at-the-edge computing platform with over half a million developers. With pre-trained AI models, developer SDKs, and support for cloud-native technologies across the full Jetson lineup, manufacturers of intelligent machines and AI developers can build and deploy high-quality, software-defined features on embedded and edge devices targeting robotics, AIoT, smart cities, healthcare, industrial applications, and more. Cloud-native support lets manufacturers and developers roll out frequent updates, improve accuracy, and adopt the latest features on NVIDIA Jetson-based edge AI devices.

| | Jetson T5000 | Jetson T4000 |
|---|---|---|
| AI performance | 2070 TFLOPS (FP4) | 1200 TFLOPS (FP4) |
| GPU | 2560-core NVIDIA Blackwell GPU with 96 Tensor Cores | 1536-core NVIDIA Blackwell GPU with 64 Tensor Cores |
| CPU | 14-core Arm® Neoverse®-V3AE 64-bit CPU | 12-core Arm® Neoverse®-V3AE 64-bit CPU |
| RAM | 128 GB 256-bit LPDDR5X | 64 GB 256-bit LPDDR5X |
| Storage | 2× NVMe (M.2 M-Key, 2280 and 2242) | 2× NVMe (M.2 M-Key, 2280 and 2242) |
| Power consumption | 40–130 W | 40–70 W |
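
As a rough illustration of how the RAM row relates to running "very large models" on the device, the sketch below estimates the memory needed for a model's weights alone at a given precision (parameters × bits per parameter ÷ 8) and compares it with each module's RAM. It is a simplification under stated assumptions: only weights are counted, although the KV cache, activations, the operating system, and other processes share the same LPDDR5X memory; GB is treated as 10^9 bytes; the function names and example model sizes are hypothetical and not part of any PCB Arts or NVIDIA tooling.

```python
"""Back-of-the-envelope weight-memory check for the Jetson T5000/T4000 modules.

Hypothetical helper, not a PCB Arts or NVIDIA tool. It counts model weights
only; KV cache, activations, the OS and other processes also use the memory.
"""

# RAM per module, taken from the comparison table (GB treated as 10**9 bytes).
MODULE_RAM_GB = {"Jetson T5000": 128, "Jetson T4000": 64}


def weight_memory_gb(num_params: float, bits_per_param: int) -> float:
    """Memory needed to store the weights alone, in GB (10**9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9


def weights_fit(num_params: float, bits_per_param: int, module: str) -> bool:
    """True if the weights alone would fit into the module's RAM."""
    return weight_memory_gb(num_params, bits_per_param) <= MODULE_RAM_GB[module]


if __name__ == "__main__":
    # Example model sizes (hypothetical): 8, 70 and 120 billion parameters.
    for params in (8e9, 70e9, 120e9):
        for bits in (16, 8, 4):  # 16-bit, 8-bit and FP4 weights
            gb = weight_memory_gb(params, bits)
            verdict = ", ".join(
                f"{module}: {'fits' if weights_fit(params, bits, module) else 'too large'}"
                for module in MODULE_RAM_GB
            )
            print(f"{params / 1e9:>5.0f}B params @ {bits:>2}-bit: {gb:6.1f} GB  ({verdict})")
```

For example, a 70-billion-parameter model needs about 35 GB for its weights at FP4 but about 140 GB at 16-bit, which exceeds even the T5000's 128 GB. This is one reason low-precision formats such as FP4 matter when running large models directly on the device.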

Technical details

| Parameter | Specification |
|---|---|
| Supported NVIDIA SOMs | Jetson T5000 or T4000 |
| Operating System | NVIDIA JetPack™ SDK 7.0: Ubuntu 24.04 (Linux Kernel 6.8) |
| Power Supply | 24–48 V DC |
| Power Consumption | Max. 460 W |
| Cooling | Passive cooling: customizable by the customer or implemented by PCB Arts; active cooling: with DIN-rail option or wall-mount solution |
| Standard Interfaces | 2× USB-C USB 3.2 (10 Gbit/s, screw terminal), 1× USB-C USB 2.0 for flashing, 1× Gigabit Ethernet (M12), 1× 10 Gbit/s Ethernet (M12) |
| Integrated Features | 8× opto-isolated I/Os, 2× opto-isolated CAN, 1× opto-isolated I²C, IMU: ICM-42670-P, crypto chip: ATSHA204A, external RTC |
| M.2 Slots | 1× M-Key 2280 for NVMe, 1× M-Key 2242 for NVMe, 1× E-Key 2230 for WiFi, 1× B-Key for LTE/5G |
| Debug Header | Via USB-C with PCB Arts adapter |
| Display Output | Via USB-C with PCB Arts adapter |
| Housing | Anodized aluminum |
| Dimensions | 210 mm × 103 mm × 61 mm; height with active cooling solution: TBD |
| Weight | TBD |
| Operating Temperature | -20 °C to +60 °C (with integrated heater) |
| IP Rating | IP67 |
| Certifications | CE, EMC, RoHS |
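
The input-voltage range and maximum power consumption in the table determine how much current the power supply and cabling must handle. The sketch below applies I = P / V: at the 460 W maximum, the device would draw roughly 19.2 A at 24 V and roughly 9.6 A at 48 V, so the lowest input voltage is the worst case. It rests on assumptions not stated by PCB Arts: the 460 W figure is treated as input power (including conversion losses), and the 20 % headroom is a hypothetical design margin, not a manufacturer recommendation. The function names are illustrative only.

```python
"""Input-current estimate for Thorium's 24-48 V DC supply (I = P / V).

Assumptions (not from PCB Arts): MAX_POWER_W is treated as input power,
and the default 20 % headroom is a hypothetical design margin.
"""

MAX_POWER_W = 460.0               # spec table: "Power Consumption: Max. 460 W"
V_IN_MIN, V_IN_MAX = 24.0, 48.0   # spec table: "Power Supply: 24-48 V DC"


def input_current_a(power_w: float, voltage_v: float) -> float:
    """Current drawn at a given input voltage, in amperes."""
    if not V_IN_MIN <= voltage_v <= V_IN_MAX:
        raise ValueError(
            f"{voltage_v} V is outside the {V_IN_MIN:g}-{V_IN_MAX:g} V input range"
        )
    return power_w / voltage_v


def supply_rating_a(voltage_v: float, margin: float = 0.2) -> float:
    """Suggested minimum supply current rating: full load plus headroom."""
    return input_current_a(MAX_POWER_W, voltage_v) * (1.0 + margin)


if __name__ == "__main__":
    for v in (24.0, 36.0, 48.0):
        print(
            f"{v:4.0f} V: {input_current_a(MAX_POWER_W, v):5.1f} A at 460 W, "
            f"rate supply for >= {supply_rating_a(v):5.1f} A"
        )
```

Sizing against the 24 V case covers the whole input range, while a 48 V supply halves the current and therefore the resistive losses in the cabling.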